Using AI for Quality (in Smart Contract Auditing)

Speed is not just for speed.

The selling point of AI so far has been speed: prototype faster, maximize output, create efficiencies. The implication of that narrative is an assumed loss of quality, often summed up in the word “slop”.

We are currently completing an internal audit on a client project, and we have discovered something that contradicts the speed story entirely.

Auditing is, by nature, a quality problem. If you have to choose between a human who takes two weeks to find 50 bugs and an AI that takes five minutes to find 30, you would pick the human every time.

Coverage > speed.

And yet, through careful experimentation, we are finding ways that AI actually improves the quality of our audit outcomes, not just the pace.

We’ve written before about the value of internal audits. Smart contracts need to be correct, and external audits are essential. But the best way to maximize the value of those external audits is to run a rigorous internal audit first.

Our team reviews code, creates intermediate deliverables, and follows the same type of process an auditing firm would. Until now, our toolchain has been traditional: static analysis, visualization tools, and a structured multi-lens review process.

This time, we are also bringing models into the mix.

LLMs find bugs but NOT all of them

Our bug bounty program has been inundated with AI-generated vulnerability submissions. All of them have been false positives.

Security “researchers” point automated tools at code and submit the output without reviewing it.

But when we turned the same class of tools on previously unaudited code ourselves, the results were different. The latest generation of models (5.4/4.6), combined with open-source auditing platforms, genuinely find actionable issues.

They’re really good at vulnerabilities that are fairly evident from the code itself, things that don’t require a deep understanding of the trust model, the interplay with external contracts, or the assumptions baked into dependencies.

For that category of bug, though, the leap in capability is real, and the ability to generate proof-of-concept exploits alongside findings makes the output immediately useful.

Many firms right now are racing to fully automate bug finding.

But that will always be a narrow approach, because the hardest vulnerabilities to catch come from understanding how contracts will be used, what external integrations assume, and where the trust boundaries sit. Models are still weak here.

Trust the PROCESS

To work around the limitations of AI, the right process is important. Here’s the risk: suppose your manual review process already includes a step where each auditor is required to read the code line by line.

Then you find an AI tool that claims to do line-by-line review as well. The temptation is obvious. Could you swap the human step for the AI step and save hours?

I’m strongly opposed to this. The guiding principle with smart contract security should be a kind of deep paranoia. If there’s any additional step you can take to secure the code, you should generally just take it.

Replacing a human step with an AI step means you might be losing coverage in ways you can’t easily measure.

AI is best used for adding process steps, not removing them. Running an AI tool is usually just a question of token cost. Treat the output as another input. But be very conservative about dropping anything humans were already doing well.

Where to use AI for auditing RIGHT NOW

The areas where AI genuinely helps are less obvious than “find all the bugs”.

Threat model generation. We have a structured threat modeling process that we’ve refined over several internal audits. We built a custom prompt that pre-generates a comprehensive threat model, and a human then reviews and corrects it. The AI-generated version is actually more comprehensive than what we produced manually. It may not be 100% as accurate on every point, but combined with human review, the final output is better. We save time and improve quality. We plan to publish the skill soon for other teams to use.

Proof-of-concept writing. When you find a potential vulnerability, a proof-of-concept (POC) test case verifies that it’s real. Writing POCs has always been time-consuming for auditors, and it’s fundamentally a software-writing problem, which is exactly where LLMs shine. Our team now automatically generates POCs for most audit findings, with a human review pass on top.
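To make the idea concrete, here is a minimal sketch of what a POC looks like, written in Python against a toy model of a vault rather than a real contract (an assumption for illustration; a real POC would normally live in the project’s own test framework). The hypothetical `Vault` pays out before updating its bookkeeping, the classic reentrancy mistake, and the hypothetical `poc_reentrancy` function shows an attacker walking away with more than they deposited.

```python
# Hypothetical toy model for illustration; not a real contract or test framework.

class Vault:
    """Toy vault with a reentrancy bug."""

    def __init__(self):
        self.balances = {}  # depositor -> credited amount
        self.funds = 0      # total value actually held by the vault

    def deposit(self, who, amount):
        self.balances[who] = self.balances.get(who, 0) + amount
        self.funds += amount

    def withdraw(self, who):
        amount = self.balances.get(who, 0)
        if amount == 0 or self.funds < amount:
            return  # models a revert
        self.funds -= amount
        who.receive(self, amount)  # BUG: external call before the balance is cleared
        self.balances[who] = 0


class Attacker:
    """Re-enters withdraw() from its receive hook a bounded number of times."""

    def __init__(self, max_reentries):
        self.stolen = 0
        self.reentries_left = max_reentries

    def receive(self, vault, amount):
        self.stolen += amount
        if self.reentries_left > 0:
            self.reentries_left -= 1
            vault.withdraw(self)


def poc_reentrancy():
    """POC: an attacker who deposits 10 extracts far more than 10."""
    vault = Vault()
    vault.deposit("alice", 100)
    vault.deposit("bob", 100)
    attacker = Attacker(max_reentries=10)
    vault.deposit(attacker, 10)
    vault.withdraw(attacker)
    return attacker.stolen, vault.funds


stolen, remaining = poc_reentrancy()
assert stolen > 10, "exploit should extract more than the deposit"
print(stolen, remaining)  # 110 100
```

Once the POC confirms the finding, it doubles as a regression test: moving `self.balances[who] = 0` above the external call (the checks-effects-interactions ordering) should make the same POC report `stolen == 10`.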

Conceptual visualizations. There’s a gap between diagrams that can be auto-generated from code (call graphs, inheritance trees) and the semantic diagrams good auditors create, the ones that involve taste and simplification to manage information density. A good auditor diagram might simplify certain nodes, delete less relevant links, or emphasize particular actors to make the picture maximally useful. Models are getting good at this kind of work, especially when paired with Mermaid diagram tools. We’re exploring automating this step because it has historically been very time-consuming to do manually.
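The mechanical half of that simplification can be sketched as follows: start from an auto-generated call graph, contract away nodes an auditor would hide (reconnecting their callers to their callees so no path is lost), and emphasize actors with a style class. This Python sketch emits Mermaid `flowchart` text; the node names, the choice of hidden nodes, and the colors are hypothetical, and in practice those judgment calls are exactly what the model or auditor would supply.

```python
def simplify(edges, hidden):
    """Remove each hidden node, linking its predecessors directly to its successors."""
    edges = set(edges)
    for h in hidden:
        preds = {a for a, b in edges if b == h and a != h}
        succs = {b for a, b in edges if a == h and b != h}
        edges = {(a, b) for a, b in edges if h not in (a, b)}
        edges |= {(p, s) for p in preds for s in succs if p != s}
    return sorted(edges)


def to_mermaid(edges, actors=()):
    """Render edges as a Mermaid flowchart, highlighting actor nodes."""
    lines = ["flowchart LR"]
    lines += [f"    {a} --> {b}" for a, b in edges]
    if actors:
        lines.append("    classDef actor fill:#fde68a,stroke:#b45309")
        lines.append(f"    class {','.join(sorted(actors))} actor")
    return "\n".join(lines)


# Hypothetical auto-generated call graph.
call_graph = [
    ("User", "Router"),
    ("Router", "Vault"),
    ("Vault", "SafeMath"),
    ("Vault", "Token"),
    ("Keeper", "Vault"),
]
diagram = simplify(call_graph, hidden=["Router", "SafeMath"])
print(to_mermaid(diagram, actors={"User", "Keeper"}))
```

Hiding `Router` keeps the fact that `User` reaches `Vault`, while hiding the leaf library `SafeMath` simply drops it, which is the kind of density reduction the paragraph describes.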

Checklist enforcement. Security checklists exist for common vulnerability patterns, and AI can largely be trusted to run through them systematically. The caveat: line-by-line review isn’t just checklist execution. In our process, when auditors review a line, they check it against the checklist and reason about it independently. One interesting tool would let you walk through code line by line with AI flagging checklist violations in real time, making the human review faster without replacing it.
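That tool idea might look roughly like the sketch below: a walker that yields every line in order, so the human still reads all of them, with checklist flags attached. The regex patterns are a deliberately crude stand-in for a model-backed check (an assumption; an LLM would not evaluate lines this way), and the checklist IDs and Solidity snippet are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistItem:
    item_id: str      # hypothetical checklist ID
    description: str
    pattern: str      # regex stand-in for a model-backed check (assumption)


CHECKLIST = [
    ChecklistItem("AUTH-01", "tx.origin used for authorization", r"\btx\.origin\b"),
    ChecklistItem("CALL-01", "delegatecall to a possibly untrusted target", r"\.delegatecall\("),
    ChecklistItem("TIME-01", "block.timestamp used in logic", r"\bblock\.timestamp\b"),
]


def walk(source, checklist=CHECKLIST):
    """Yield every (line_no, line, flags) so the reviewer still sees each line."""
    for no, line in enumerate(source.splitlines(), start=1):
        flags = [c.item_id for c in checklist if re.search(c.pattern, line)]
        yield no, line, flags


SOURCE = """\
function withdraw() external {
    require(tx.origin == owner);
    payable(msg.sender).transfer(address(this).balance);
}"""

for no, line, flags in walk(SOURCE):
    print(f"{no:>3}  {line:<56}{', '.join(flags)}")
```

The design choice that matters is that unflagged lines are still yielded: the flags speed up the human pass instead of deciding which lines the human gets to see.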

Pattern-matching across a codebase. When you find one instance of a vulnerability, AI is excellent at finding every other instance of the same pattern. I’ve been using AI this way in auditing since last year, but the new generation of models can now handle more general types of vulnerabilities beyond simple syntactic matches.

Invariant proving. We’re experimenting with having AI prove a comprehensive set of invariants based on the code. For a big enough codebase in a tight enough audit window, the human time investment for formal invariant checking is hard to justify. But it’s tractable with AI. I can imagine full formal verification becoming very accessible this way.
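Full formal proof needs a dedicated verifier, but the shape of invariant-driven checking can be sketched with a much weaker technique: a bounded exhaustive search that explores every sequence of operations up to a fixed depth on a toy token model and reports the shortest sequence that breaks an invariant. The token, its deliberate self-transfer bug, and the `sum(balances) == total_supply` invariant are hypothetical. A bounded search only shows that no counterexample exists up to that depth, which is not a proof.

```python
from collections import deque
from itertools import product

ACCOUNTS = ("a", "b")  # hypothetical tiny state space
AMOUNTS = (1, 2)


def transfer_buggy(balances, src, dst, amt):
    """Toy transfer with a self-transfer bug; returns None to model a revert."""
    if balances[src] < amt:
        return None
    new = dict(balances)
    src_bal, dst_bal = new[src], new[dst]  # both read before either write
    new[src] = src_bal - amt
    new[dst] = dst_bal + amt  # if src == dst, this overwrites the debit
    return new


def transfer_fixed(balances, src, dst, amt):
    """Same transfer, each update reading the current value, so src == dst nets to zero."""
    if balances[src] < amt:
        return None
    new = dict(balances)
    new[src] -= amt
    new[dst] += amt
    return new


def bounded_check(step, initial, total_supply, depth):
    """BFS over every transfer sequence up to `depth`; return the shortest violating trace."""
    queue = deque([(initial, [])])
    while queue:
        state, trace = queue.popleft()
        if sum(state.values()) != total_supply:  # invariant: sum(balances) == total_supply
            return trace
        if len(trace) == depth:
            continue
        for src, dst, amt in product(ACCOUNTS, ACCOUNTS, AMOUNTS):
            nxt = step(state, src, dst, amt)
            if nxt is not None:
                queue.append((nxt, trace + [(src, dst, amt)]))
    return None  # no violation within the bound; NOT a proof for all depths


start = {"a": 2, "b": 2}
print(bounded_check(transfer_buggy, start, total_supply=4, depth=2))  # [('a', 'a', 1)]
print(bounded_check(transfer_fixed, start, total_supply=4, depth=2))  # None
```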

Adversarial simulation. Some of the best auditors like to deploy contracts and just poke at them with transactions. This is a fundamentally different process from reading code, closer to sophisticated fuzzing. I haven’t seen AI applied here yet, but it could be promising: feed the model the code to build intuition about what to try, then let it probe the deployed contract for unexpected behaviors. Worth exploring.
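Poking at a deployed contract with transactions is close to stateful random fuzzing, which can be sketched in a few lines. Below, a hypothetical toy vault with a forgotten state update is driven by random actions while an invariant is checked after each one. The uniform random choice of actions is where the paragraph’s idea would plug in: a model that has read the code could bias those choices toward suspicious functions. That weighting is an assumption and is not implemented here.

```python
import random


class ToyVault:
    """Hypothetical vault; emergency_withdraw forgets to zero the user's balance."""

    def __init__(self):
        self.balances = {"u1": 0, "u2": 0}
        self.reserves = 0

    def deposit(self, user, amt):
        self.balances[user] += amt
        self.reserves += amt

    def withdraw(self, user, amt):
        if self.balances[user] < amt:
            return  # models a revert
        self.balances[user] -= amt
        self.reserves -= amt

    def emergency_withdraw(self, user):
        self.reserves -= self.balances[user]  # BUG: balance is never reset to 0


def invariant(vault):
    return vault.reserves == sum(vault.balances.values())


def fuzz(steps, seed):
    """Apply random actions; return the action log up to the first invariant violation."""
    rng = random.Random(seed)
    vault, log = ToyVault(), []
    for _ in range(steps):
        user = rng.choice(["u1", "u2"])
        # Uniform choice; a model-guided fuzzer would weight this (not implemented).
        action = rng.choice(["deposit", "withdraw", "emergency_withdraw"])
        if action == "emergency_withdraw":
            vault.emergency_withdraw(user)
            log.append((action, user))
        else:
            amt = rng.randint(1, 10)
            getattr(vault, action)(user, amt)
            log.append((action, user, amt))
        if not invariant(vault):
            return log
    return None


result = fuzz(steps=1000, seed=0)
print(result[-3:] if result else "no violation found")
```

Because the run is seeded, replaying the returned log against a fresh `ToyVault` reproduces the failure, which turns a fuzzing hit into a ready-made POC.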

Feedback loops. When an external auditing firm finds issues we missed, AI can help analyze whether the gap was human error or a missing process step. This is important because the value of an external audit isn’t just catching remaining bugs. The real value is that it audits your internal auditing team. Every miss is a signal about where your process has holes, and AI can help systematize that learning so the holes get filled for next time.
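The bookkeeping behind that feedback loop can be sketched simply: for each issue the external auditors found that the internal audit missed, ask whether any step in the internal process was supposed to cover that class of bug. If one was, the miss points to execution (human error); if none was, it points to a hole in the process. The step names, categories, and findings below are hypothetical, and in the workflow the paragraph describes, AI would help with the harder part of assigning each finding a category.

```python
from collections import Counter

# Hypothetical map of internal process steps to the bug categories each one targets.
PROCESS_STEPS = {
    "threat_model": {"access-control", "trust-boundary"},
    "checklist": {"reentrancy", "tx-origin", "unchecked-call"},
    "invariants": {"accounting"},
}


def classify_misses(missed_findings, steps=PROCESS_STEPS):
    """Label each missed external finding as a human-error miss or a process gap."""
    covered = set().union(*steps.values())
    labels = {}
    for finding_id, category in missed_findings:
        labels[finding_id] = "human error" if category in covered else "missing process step"
    return labels


# Hypothetical findings from an external audit that the internal audit missed.
missed = [
    ("EXT-1", "reentrancy"),
    ("EXT-2", "oracle-manipulation"),
    ("EXT-3", "accounting"),
]
labels = classify_misses(missed)
print(Counter(labels.values()))
gaps = sorted({cat for fid, cat in missed if labels[fid] == "missing process step"})
print("candidate new steps:", gaps)
```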

Don’t let AI distract from process discipline. As AI takes on more of the audit workload, the human’s job is to be both a great auditor and a great orchestrator. The most helpful tool the human has is a great process. As AI speeds up certain steps, you can afford to add more. Evolve the process. Experiment with new steps.

Change of reference frame. I’ve been rereading Rick Rubin’s The Creative Act and one idea keeps coming back: a change of environment or reference frame can facilitate creativity. The same is true for auditing. AI doesn’t need to find all the bugs or do all the work. It just needs to help you look at the code differently, and that alone may surface issues you would otherwise have missed.

These are just a few examples, and I’m sure there will be many more.

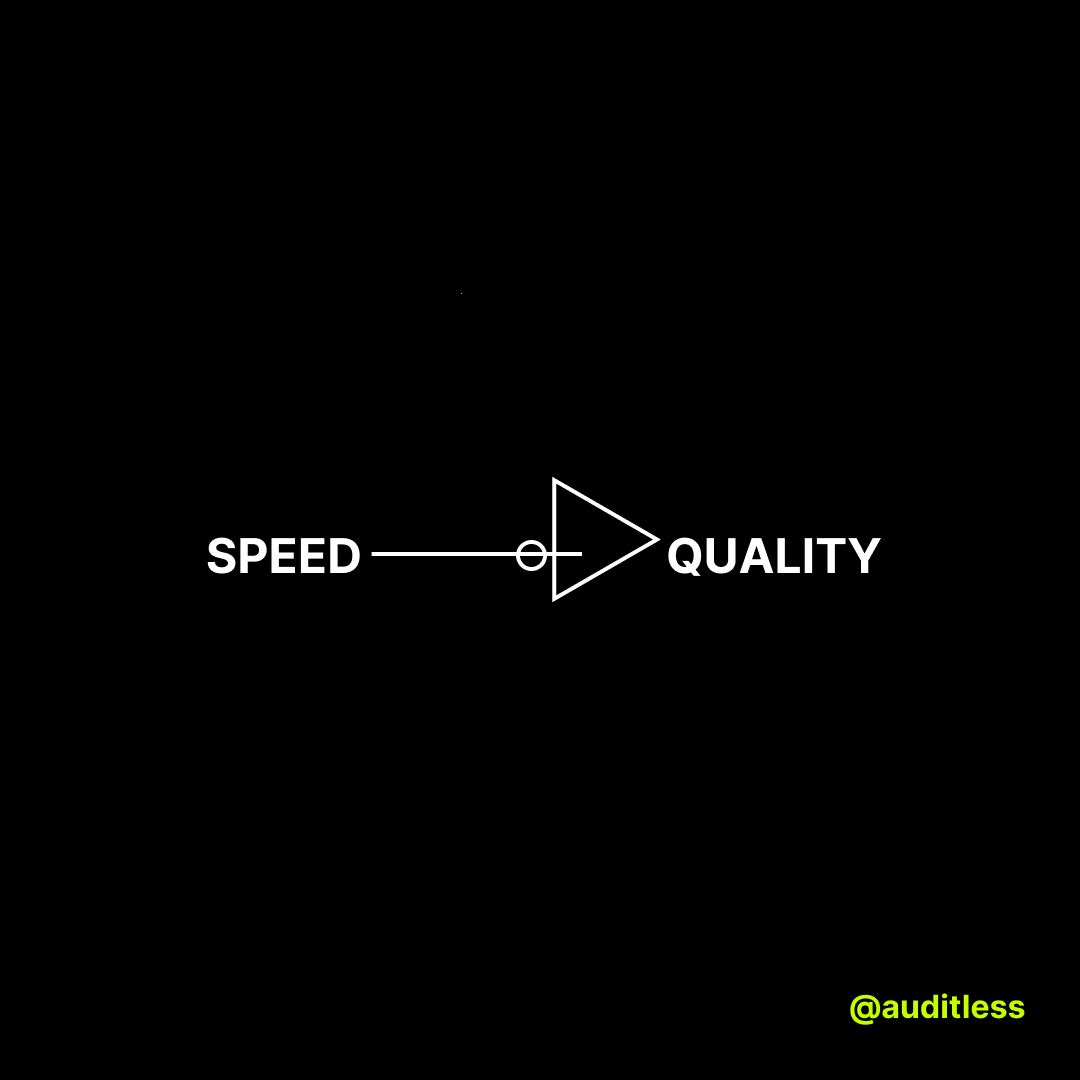

SPEED → QUALITY

The reason this all works isn’t because AI makes auditing faster (though it does). It’s because speed, applied strategically, converts into quality.

First, by automating mundane work, you free human attention for the parts that actually need deep reasoning. When AI handles threat model generation, checklist enforcement, and POC writing, auditors can spend their time on the hard problems: trust model analysis, dependency assumptions, cross-contract interaction risks.

Second, speed lets you do things that humans could never justify doing. Proving a full set of invariants across a large codebase in a short audit window was simply not feasible before. Now it is. AI doesn’t just speed up existing steps; it makes entirely new steps possible. That’s a direct coverage gain.

Third, AI is consistent in a way humans aren’t. We’ve noticed internally that excitement about following the process fades over time. Humans get bored of threat models. They start questioning whether a step is worth the effort. AI has no such drift. Ask it to run a threat model three months from now and it’ll do it at least as well as today, probably better as models improve. That consistency is itself a quality advantage.

This applies well beyond smart contract security. The instinct is to keep AI at arm’s length when it comes to quality. But speed and quality aren’t opposed if you’re thoughtful about where the speed goes. Reclaim human attention for deep work. Make previously impossible steps possible. Maintain consistency where humans can’t.