Default to Closed Loop

A new paradigm for automation.

Nat Eliason gave an AI agent called Felix $1,000 and asked it to build a business. Felix launched a website, wrote a 66-page playbook overnight while Nat slept, created a marketplace for AI agent personas called Claw Mart, set up its own Stripe account, and started collecting revenue. Within weeks, Felix had crossed $50K in total revenue, not counting its own crypto treasury. It now operates as CEO of The Masinov Company, sending Nat a morning report each day with business metrics and a prioritized list of what it plans to work on next.

Felix is an experimental closed loop agent: a system given an objective (maximize revenue), the tools to pursue it (Stripe, GitHub, X, email), and the autonomy to operate continuously without waiting for a human to tell it what to do next.

But closed loop agents aren’t just experiments. They represent a better way of working with AI.

Closed loop systems are nothing new

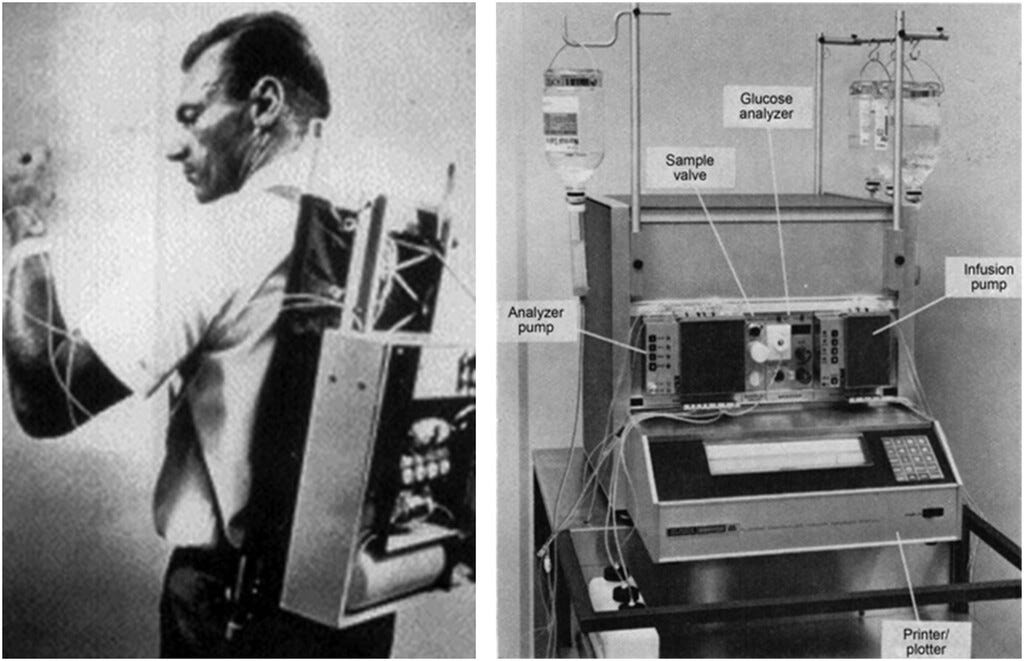

The concept of a closed loop is decades old. A thermostat in a chemical plant reads temperature, adjusts a valve, reads temperature again. My rice cooker uses fuzzy logic to do the same thing. These are closed loop automations: they receive inputs, act, observe results, and feed those results back into the next decision. As a more tangible example, the first insulin pump dates back to the 1960s.
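To make the read, act, observe cycle concrete, here is a minimal sketch of a thermostat-style loop in Python. The `SimulatedTank` class, its heating and cooling constants, and the proportional `gain` are all made up for illustration; a real plant controller would be considerably more involved.

```python
class SimulatedTank:
    """Toy stand-in for a heated tank (hypothetical physics, illustration only)."""

    def __init__(self, temp=20.0, ambient=20.0):
        self.temp = temp
        self.ambient = ambient

    def read_temp(self):
        return self.temp

    def set_valve(self, opening):
        # An open valve adds heat; the tank also loses heat to its surroundings.
        self.temp += 5.0 * opening - 0.1 * (self.temp - self.ambient)


def run_loop(tank, target, steps, gain=0.2):
    """Read the temperature, act on the valve, and repeat with the new reading."""
    history = []
    for _ in range(steps):
        temp = tank.read_temp()                         # observe
        error = target - temp                           # compare with the setpoint
        opening = max(0.0, min(1.0, gain * error))      # decide, clamped to [0, 1]
        tank.set_valve(opening)                         # act
        history.append(temp)                            # the next pass sees the result
    return history


history = run_loop(SimulatedTank(), target=60.0, steps=200)
print(round(history[0], 1), round(history[-1], 1))
```

In this toy setup the temperature settles a few degrees under the target, which is the usual behaviour of a proportional-only controller. The point is the shape of the loop: each decision is driven by the observed result of the previous one.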

In finance, algorithmic trading funds also operate this way. The algorithm receives market signals on a predictable cadence, makes decisions with real authority, executes trades, observes outcomes, and adjusts.

These systems share a number of pre-conditions that make them rare: a high-value problem, highly structured data, and a high cost to implement. They were also only applicable to problems that had an abundance of data but didn't require much intelligence. The surface area of work that could be closed-looped was tiny.

Closed loop agents pursue OBJECTIVES

The distinction I care about is between a closed loop automation and a closed loop agent. An automation executes a task. An agent pursues an objective.

Give a trading algorithm the task of executing a buy order when RSI drops below 30 and it will do that forever. Give a closed loop agent the objective of maximizing portfolio returns and it will decide what to trade, when to trade, how to size positions, and whether its own strategy is working. It creates its own tasks in service of the objective.

This was never really possible before LLMs.

Closed loop systems were bespoke, hard-coded, domain-specific workflows.

Then, with the arrival of LLMs, agents like aixbt showed what was barely achievable with primitive models: an autonomous crypto Twitter account that ingested news, formed its own theses, posted analysis, replied to mentions, and accrued >400,000 followers, outperforming most human crypto influencers on engagement metrics.

I believe we are entering a third era of closed loop automation.

Modern agents can plan, conduct multi-step research, reason through tradeoffs, and reflect on their own outputs. The surface of work these agents can be applied to has expanded radically. Felix identifies opportunities, designs products, writes software, manages launches, handles customer support, and iterates based on sales data.

Felix now runs on a three-layer memory system: a knowledge graph for durable facts, daily notes that get consolidated nightly, and a tacit knowledge layer that stores preferences and lessons learned. Each morning it reviews Stripe data and site stats, flags items it needs from Nat, lists active projects, and proposes the next five things it should focus on. It delegates heavy programming to sub-agents. It has its own GitHub, its own email and, fashionably, its own crypto treasury.

The $1M revenue goal for 2026 is the North Star it reminds itself of every morning.

There is a fair question about how much of Felix’s early traction came from Nat’s existing audience. Nat has hundreds of thousands of followers across platforms, and crypto communities amplified Felix’s token launch. The revenue numbers are real, but distribution was not zero-to-one.

aixbt faces a similar dynamic: its creator controls what data enters the context window, and its initial virality no doubt benefited from the novelty of being first.

But we should take the underlying capability very seriously.

Closed loop as a way of building with AI

If you build a regular coding agent, you become the bottleneck. You’re its product manager: coming up with features, reviewing output, clicking through the app and giving feedback. Even if the agent can autonomously build for 10 hours straight, you keep having to come back to steer. You become both the PM and the engineering manager, and the system can’t run infinitely while you sleep.

With a closed loop agent, you think carefully about picking a problem where the system can chip away at the objective over time without human input. If you build a “closed loop” research analyst that publishes daily posts but never gets any traffic or engagement, it’s not meaningfully closed loop, because it’s not achieving any objective you can leave it alone to grow.

But when you get past that threshold, something interesting happens.

You can stop being the bottleneck and become an observer of the system. The best analogy is Jensen Huang’s approach to running Nvidia. He has ~60 direct reports, no scheduled 1:1s, and reads about a hundred “Top Five Things” emails each morning from people across every level of the company. He’s not directing the work. He’s probing the organisation, observing inputs and outputs, and offering feedback where it will have the most leverage. He calls it “stochastically sampling the system.”

That is exactly the role you take with a closed loop agent. You observe. You identify bottlenecks. You spend your time where you can most improve the system’s output. Sometimes you jump in and directly work on a specific task. But mostly you think about how to fix forward so the same types of challenges get handled better next time.

Every AI system’s bottleneck is a human input. Once you fix that, you can see the real bottlenecks, and that’s where it gets interesting.

What BOTTLENECK ANALYSIS looks like with a closed loop agent

What does a day in the life of a closed loop agent maintainer look like?

Everyone wants to build a Polymarket agent these days, so let’s use that as an example.

It picks a niche, makes predictions, places bets, and interacts with a Discord community to test hypotheses and gather intelligence.

You get it running and hit the first bottleneck: it’s not profitable. Is the sample size big enough? Are you picking events that are insider-driven and therefore unwinnable? Or is the calibration just off? Maybe you look at the calibration curve and find the agent is systematically overconfident on certain event types. You adjust baseline probabilities.
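A calibration check of this kind can be sketched in a few lines of Python. The record format (event type, predicted probability, 0/1 outcome), the function names, and the sample predictions are assumptions for illustration, not data from a real agent.

```python
from collections import defaultdict


def calibration_curve(predictions, n_bins=5):
    """Group predictions by event type and probability bin.

    predictions: iterable of (event_type, predicted_prob, outcome), outcome 0 or 1.
    Returns {event_type: [(mean_predicted, observed_rate, count), ...]}.
    """
    bins = defaultdict(lambda: defaultdict(list))
    for event_type, prob, outcome in predictions:
        idx = min(int(prob * n_bins), n_bins - 1)   # prob == 1.0 goes in the top bin
        bins[event_type][idx].append((prob, outcome))

    curve = {}
    for event_type, by_bin in bins.items():
        rows = []
        for idx in sorted(by_bin):
            items = by_bin[idx]
            mean_prob = sum(p for p, _ in items) / len(items)
            hit_rate = sum(o for _, o in items) / len(items)
            rows.append((mean_prob, hit_rate, len(items)))
        curve[event_type] = rows
    return curve


def mean_gap(rows):
    """Count-weighted (predicted - observed). Positive means the stated
    probabilities ran higher than what actually happened."""
    total = sum(n for _, _, n in rows)
    return sum((p - r) * n for p, r, n in rows) / total


# Made-up sample: the agent says 90% on sports but is right half the time.
sample = [
    ("sports", 0.9, 1), ("sports", 0.9, 0), ("sports", 0.9, 0), ("sports", 0.9, 1),
    ("politics", 0.7, 1), ("politics", 0.7, 1), ("politics", 0.7, 0), ("politics", 0.7, 1),
]
for event_type, rows in calibration_curve(sample).items():
    print(event_type, round(mean_gap(rows), 2))
```

A large positive gap on one event type is the kind of signal that would justify pulling that type's baseline probabilities down, rather than retuning the whole agent.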

Profitability improves. The agent becomes more conservative, researches more deeply, and eventually finds high-conviction bets.

But now it’s spending too many research tokens. It’s investigating too many markets in parallel and running out of budget before completing analysis on enough of them. You run an 80/20 analysis on which research activities actually moved the needle on prediction accuracy and cut the rest.
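One rough way to run that 80/20 pass, assuming you already have some attribution of accuracy lift to each research activity (which is the hard part, and not shown here): rank activities by lift per token and keep only as many as it takes to cover 80% of the total lift. The activity names and numbers below are invented for illustration.

```python
def pareto_cut(activities, keep_share=0.8):
    """Keep the most token-efficient activities until keep_share of lift is covered.

    activities: {name: (tokens_spent, accuracy_lift)}, with tokens_spent > 0.
    Returns the names to keep, most efficient first.
    """
    total_lift = sum(lift for _, lift in activities.values())
    ranked = sorted(
        activities.items(),
        key=lambda item: item[1][1] / item[1][0],   # lift per token
        reverse=True,
    )
    kept, covered = [], 0.0
    for name, (tokens, lift) in ranked:
        if covered >= keep_share * total_lift:
            break
        kept.append(name)
        covered += lift
    return kept


# Hypothetical attribution data: (tokens spent, accuracy lift in arbitrary points).
activities = {
    "news_search": (1000, 40),
    "social_scrape": (5000, 10),
    "expert_forum": (2000, 35),
    "historical_base_rates": (500, 10),
    "deep_pdf_reads": (8000, 5),
}
kept = pareto_cut(activities)
tokens_kept = sum(activities[name][0] for name in kept)
print(kept, tokens_kept)
```

With these made-up numbers, three of the five activities cover 85% of the lift while using 3,500 of the 16,500 tokens, which is the shape of result that makes the cut worth making.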

Research efficiency improves. Now the agent is running out of markets in its niche. It’s putting up good predictions on a third of available markets, but needs to expand. So you broaden the niche.

All this work is iterative and high-leverage. You’re not doing the research yourself. You’re not picking the markets. You’re mainly tuning the machine. And you can still override individual predictions, pause certain bets, or add safeguards.

You’re defaulting to closed loop but maintaining quality.

So where can this be applied?

TWO kinds of closed loop agent problems

In practice, closed loop agents make sense in two categories, which together form a barbell.

The first is low-level automation where it’s not worth any human input. Bug triage and basic fixes for a codebase. Simple customer support for a product that can’t afford a support team. The agent does the best thing that can be automated, and you don’t look at it unless something breaks. Your only cost is tokens.

The second is the opposite end of the spectrum: projects that are core to your business. These are systems you’re trying to make closed loop because you want to improve the leverage and scale at which you can attack them.

Many problems won’t be closed loop and shouldn’t be. One-off tasks, work where you value the human voice, problems where human ideas are the whole point. This is not a hammer to apply to everything. But for the right problems at either end of the barbell, it becomes the natural way to work.

It also only gets better over time.

Closed loop agents are a HEDGE against the future

If model capabilities keep improving, and every indication is that they will, closed loop agents get easier and easier to build and more capable over time. The window between model versions is so compressed now that if you’re building exclusively for the capabilities of the current model, you’re probably building the wrong thing.

Closed loop agents are inherently forward-compatible. You’re not optimizing for a specific model’s quirks. You’re building a system where better models mean better performance on the objective, automatically.

This is why I’m starting to think about closed loop agents across several domains. The architecture forces you to ask the right question: can this problem be decomposed into an objective, a set of tools, and a feedback mechanism that doesn’t require me?
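As a sketch of that decomposition, here is one way to write it down in Python: an objective, a set of tools, and a feedback function that measures progress without a human in the loop. Every name here (`ClosedLoop`, `greedy_policy`, the toy tools) is hypothetical, and the policy is a trivial stand-in for what would be an LLM deciding what to do next.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class ClosedLoop:
    objective: str                          # what the system is trying to improve
    measure: Callable[[], float]            # feedback that needs no human input
    tools: Dict[str, Callable[[], None]]    # actions the agent is allowed to take
    choose: Callable[[str, float, List[Tuple[str, float]], List[str]], str]
    log: List[Tuple[str, float]] = field(default_factory=list)

    def step(self) -> float:
        before = self.measure()
        tool = self.choose(self.objective, before, self.log, list(self.tools))
        self.tools[tool]()                          # act
        after = self.measure()                      # observe
        self.log.append((tool, after - before))     # feed the result back
        return after


def greedy_policy(objective, metric, log, tool_names):
    """Stand-in for an LLM: try each tool once, then repeat the best one."""
    tried = {name for name, _ in log}
    for name in tool_names:
        if name not in tried:
            return name
    return max(log, key=lambda entry: entry[1])[0]


# Toy world: two tools with different effects on a revenue counter.
state = {"revenue": 0.0}
loop = ClosedLoop(
    objective="maximize revenue",
    measure=lambda: state["revenue"],
    tools={
        "tweet": lambda: state.update(revenue=state["revenue"] + 1),
        "ship_feature": lambda: state.update(revenue=state["revenue"] + 10),
    },
    choose=greedy_policy,
)
for _ in range(5):
    loop.step()
print(state["revenue"], [tool for tool, _ in loop.log])
```

If you can fill in `measure` for your problem without writing "ask me", that is a decent first sign the problem can be closed-looped. Swapping in a better policy changes nothing else in the structure.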

If the answer is yes, even partially, you should be building toward closed loop now.

I'm convinced closed loop agents will stop being just an attention grab.

For certain problems, they’ll just be how work gets done.

And it’s also a decidedly more fun way to use AI.